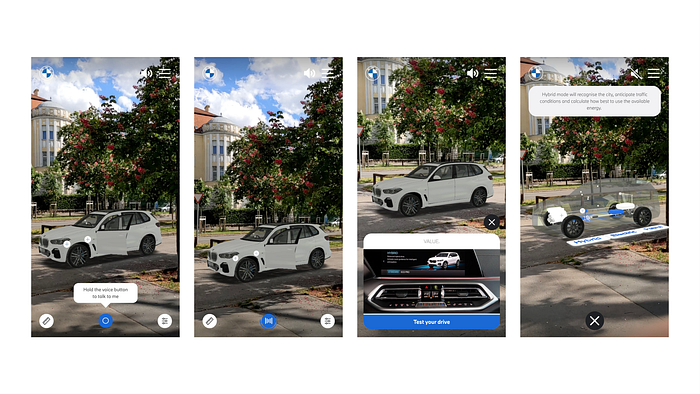

BMW Virtual Viewer is an augmented reality web application accompanied by a voice chatbot.

Speech recognition, text-to-audio, and more

Dialogflow is used to implement the conversational user interface. It is a service that recognizes the words spoken in an input audio file and matches them against pre-defined phrases, helping developers turn audio into user intents.

Intents

In a Dialogflow project, “an intent categorizes an end-user’s intention for one conversation turn”. It is a building block of a conversation. Since the application doesn’t have complex, multi-turn conversations, intents are used to recognize what the user would like to ask or command, and to hold the responses to these questions. When an intent is matched, Dialogflow returns some metadata (such as the intent matching confidence) along with a path to a sound file, which is the pre-defined textual response “read out loud” by the digital assistant. But how can an intent be configured to be the winning match for certain phrases said by the user?

Training phrases

An intent can have multiple training phrases, such as “take a picture” or just “photo”. When an audio file is uploaded, Dialogflow compares the recognized content against all the registered phrases and decides which intent fits best. The matched intent is what we get back from Dialogflow, and the application acts on it.

Note: intents can also be triggered through network endpoint (API) calls, not just by audio files that match the training phrases.

With the concepts introduced above, it becomes clear how Dialogflow acts as a user interface. In a graphical user interface, the user communicates with the system by interacting with visible components, for example by clicking a button. A button with a camera shutter icon advertises the functionality of taking a picture or screenshot. When the user presses it, that event can be handled directly as a request to take a picture, without any additional steps.

In a conversational user interface, Dialogflow is the service that converts sound-wave information into a “take a picture” intent, the same information that is built into the `onClick` event handler in a GUI.
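To make the parallel concrete, here is a minimal TypeScript sketch in which a button click and a matched intent end up in the same action. The intent names (`take_picture`, `next_model`) and the `handleIntent` function are hypothetical and only serve as an illustration; they are not taken from the project.

```typescript
// Actions the application can perform, independent of how they were requested.
type Action = () => string;

const takePicture: Action = () => "picture taken";
const showNextModel: Action = () => "next model shown";

// Hypothetical intent names mapped to the same actions a GUI would call.
const intentHandlers = new Map<string, Action>([
  ["take_picture", takePicture],
  ["next_model", showNextModel],
]);

// GUI path: the button already encodes the intent,
// so the click handler calls the action directly.
function onShutterClick(): string {
  return takePicture();
}

// Conversational path: Dialogflow has resolved the audio to an intent name,
// and the app only has to look up the matching action.
function handleIntent(intentName: string): string {
  const action = intentHandlers.get(intentName);
  return action !== undefined ? action() : "unknown intent";
}
```

Both `onShutterClick()` and `handleIntent("take_picture")` run the same `takePicture` action; the only difference is who worked out what the user wanted.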

Model-specific intents

One particular issue we had to solve during development was getting Dialogflow to return model-specific responses. When the user asks “how far can it go with a full charge?”, “it” means the currently selected model. The chatbot should reply with regard to the current model (the X5, for example). What are the solutions to this problem?

1. Dialogflow could know that the current model is the X5; this is known as the context of the conversation.

   - When a model is selected, an event is fired on Dialogflow whose output context is something like `X5Context`.

   - There are intents for every model, belonging to the same group, each with the proper input context:

     ~ “the X1 can go x miles” (with `X1Context` input context)

     ~ “the 330E can go y miles” (with `330EContext` input context)

     ~ “the X5 can go up to z miles” (with `X5Context` input context)

2. There could be a generic intent that has the training phrase (“how far can it go with a full charge?”). When this intent is matched, the app triggers a model-specific intent, which returns the final answer to the user’s question.

The first option requires a little more configuration: all related intents must share the same training phrases, and their input contexts must be set.
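As a rough mental model of the first option, the sketch below keeps one intent per model, all sharing the same training phrase, and lets the active context decide which one wins. This is not Dialogflow's actual matching algorithm: the `IntentConfig` type, the `matchIntent` function and the exact-phrase comparison are simplifying assumptions, and x, y, z are the placeholders used above.

```typescript
interface IntentConfig {
  inputContext: string;      // context that must be active for this intent to match
  trainingPhrases: string[]; // identical across all model-specific variants
  response: string;
}

const rangePhrases = ["how far can it go with a full charge?"];

const rangeIntents: IntentConfig[] = [
  { inputContext: "X1Context", trainingPhrases: rangePhrases, response: "The X1 can go x miles." },
  { inputContext: "330EContext", trainingPhrases: rangePhrases, response: "The 330E can go y miles." },
  { inputContext: "X5Context", trainingPhrases: rangePhrases, response: "The X5 can go up to z miles." },
];

// Selecting a model fires an event whose output context becomes the active one.
function contextForModel(model: string): string {
  return `${model}Context`;
}

// Simplified matching: exact phrase comparison, filtered by the active context.
function matchIntent(utterance: string, activeContext: string): IntentConfig | undefined {
  const normalized = utterance.trim().toLowerCase();
  return rangeIntents.find(
    (intent) =>
      intent.inputContext === activeContext &&
      intent.trainingPhrases.includes(normalized)
  );
}
```

With the X5 selected, `matchIntent("How far can it go with a full charge?", contextForModel("X5"))` picks the X5 variant, even though all three intents share the phrase.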

The second option requires the application to have more knowledge and takes two requests to Dialogflow. Unlike the first option, the flow here is:

1. The user’s question is sent to Dialogflow.

   <- the generic intent is returned

2. The model-specific intent is triggered by the application.

   <- the model-specific event response is returned
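The two round trips of the second option could be sketched as follows. The `AssistantClient` interface is a hypothetical wrapper rather than the Dialogflow SDK, the question is passed as text instead of audio for brevity, and the `range_X5` event naming scheme is an assumption made for this example.

```typescript
// Minimal view of what the app needs from a response.
interface IntentResult {
  intentName: string;
  responseText: string;
}

// Hypothetical client wrapper; a real one would call the Dialogflow API.
interface AssistantClient {
  detectFromText(text: string): Promise<IntentResult>;
  triggerEvent(eventName: string): Promise<IntentResult>;
}

// Assumed naming scheme: generic intent "range" + model "X5" -> event "range_X5".
function modelEventName(genericIntent: string, model: string): string {
  return `${genericIntent}_${model}`;
}

async function askAboutCurrentModel(
  client: AssistantClient,
  question: string,
  currentModel: string,
  genericIntents: ReadonlySet<string>
): Promise<IntentResult> {
  // Request 1: the user's question is matched to an intent.
  const first = await client.detectFromText(question);
  if (!genericIntents.has(first.intentName)) {
    return first; // not a model-specific question, one request is enough
  }
  // Request 2: the app, which knows the current model, triggers the specific intent.
  return client.triggerEvent(modelEventName(first.intentName, currentModel));
}
```

The extra knowledge mentioned above shows up in two places: the app has to know which intents are generic, and it has to know how to derive the model-specific event from the current model.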

Subtitles

As an accessibility feature, the application can show subtitles of what the assistant is currently saying. They are only shown when the sound is muted.

Dialogflow supports configuring the responses using Speech Synthesis Markup Language (SSML), an XML-based markup language that enables features such as emphasizing certain parts of a sentence or adding pauses. One tag supported by SSML is `<metadata>`, in which information about the document can be placed. We used this element to store the subtitle information, because its content is not read out loud during the text-to-audio process. As the Dialogflow response returns the whole response SSML (not just the generated audio file), the application can extract the subtitles from it.

The format we came up with looks like this:

```
<speak>
  The hybrid can go up to n miles.
  <metadata>
    <subtitles>
      <text time="1000">The hybrid can go</text>
      <pause time="500"/>
      <text time="1000">up to n miles.</text>
    </subtitles>
  </metadata>
</speak>
```

Here, the `subtitles`, `text` and `pause` elements are custom. They are parsed and eventually become a list of objects such as:

```
{
  time: number,
  content: string | null
}
```
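A minimal sketch of that parsing step, assuming the subtitle markup always follows the format above. The `SubtitleCue` name and the `parseSubtitles` function are illustrative, and mapping a `pause` to `content: null` is an assumption based on the object shape. Regular expressions are used here to keep the example self-contained; in the browser, `DOMParser` would be the more robust choice.

```typescript
interface SubtitleCue {
  time: number;           // value of the `time` attribute
  content: string | null; // assumed to be null for a <pause/>
}

function parseSubtitles(ssml: string): SubtitleCue[] {
  // Only look inside the custom <subtitles> element.
  const block = /<subtitles>([\s\S]*?)<\/subtitles>/.exec(ssml);
  if (block === null) {
    return [];
  }

  // Matches either <text time="N">content</text> or <pause time="N"/>.
  const cuePattern =
    /<text\s+time="(\d+)"\s*>([\s\S]*?)<\/text>|<pause\s+time="(\d+)"\s*\/>/g;
  const cues: SubtitleCue[] = [];
  let match: RegExpExecArray | null;
  while ((match = cuePattern.exec(block[1])) !== null) {
    if (match[1] !== undefined) {
      // <text> element: keep its trimmed content.
      cues.push({ time: Number(match[1]), content: match[2].trim() });
    } else {
      // <pause> element: no content to show.
      cues.push({ time: Number(match[3]), content: null });
    }
  }
  return cues;
}
```

Applied to the SSML example above, this yields three entries: the first text, a pause with `content: null`, and the second text.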

This data can be sequenced and shown on the UI. It not only makes the experience available in silence, which some users may prefer, but also serves as a great accessibility feature.
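One way to sequence such a list is to turn each entry into a start offset. The sketch below assumes that `time` is a duration in milliseconds and that entries play back to back; neither is stated explicitly above, and the `scheduleCues` and `ScheduledCue` names are made up for this example.

```typescript
interface SubtitleCue {
  time: number;
  content: string | null;
}

interface ScheduledCue {
  startMs: number;        // offset from the start of playback
  content: string | null; // null means nothing is shown during this slot
}

// Assumes each cue's `time` is a duration in milliseconds
// and that cues follow each other without gaps.
function scheduleCues(cues: SubtitleCue[]): ScheduledCue[] {
  let offset = 0;
  return cues.map((cue) => {
    const scheduled: ScheduledCue = { startMs: offset, content: cue.content };
    offset += cue.time;
    return scheduled;
  });
}
```

Each scheduled entry can then be handed to a timer (for instance `setTimeout` with `startMs` as the delay) that updates the subtitle line in the UI.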

Recap

This is the end of the third article; the first two can be found here:

Check out how a hybrid BMW looks in your own garage

Going on a virtual journey (BMW project, part 2)

We’ve covered many frontend-related aspects of the application: how the experience and interaction come together through 8thWall and Dialogflow, and how the flow is dictated by the journey.

It was quite an interesting experience.
